Фёдоров Борис Сергеевич

Great news:

Added the -Interactive login option to Connect-PnPOnline. It is similar to -UseWebLogin but without the limitations of the latter: -UseWebLogin uses cookie-based authentication against SharePoint and cannot acquire Graph tokens, while -Interactive uses Azure AD authentication and, as a result, can acquire Graph tokens.

More changes: https://github.com/pnp/powershell/releases/tag/1.3.0

OnNew:

Set(SharePointFormMode, "NewForm"); NewForm(formNew); Navigate(screenNew, ScreenTransition.None)

OnEdit:

Set(SharePointFormMode, "EditForm"); EditForm(formEdit); Navigate(screenEdit, ScreenTransition.None)

OnView:

Set(SharePointFormMode, "ViewForm"); ViewForm(formView); Navigate(screenView, ScreenTransition.None)

OnSave:

If(SharePointFormMode = "NewForm", SubmitForm(formNew), SharePointFormMode = "EditForm", SubmitForm(formEdit))

OnCancel:

If(SharePointFormMode = "NewForm", ResetForm(formNew), SharePointFormMode = "EditForm", ResetForm(formEdit))

Suppose you run some PnP PowerShell code unattended (e.g., a daemon/service app or a background job) under application permissions, with no user interaction.

Your app needs to connect to SharePoint and/or the Microsoft Graph API. Your organization requires authentication with a certificate (no client secrets), and you want the certificate stored securely in Azure Key Vault.

Here is sample PowerShell code that gets the certificate from Azure Key Vault and connects to SharePoint with PnP (Connect-PnPOnline):

# ensure you use PowerShell 7

$PSVersionTable

# connect to your Azure subscription

Connect-AzAccount -Subscription "<subscription id>" -Tenant "<tenant id>"

Get-AzSubscription | fl

Get-AzContext

# Specify Key Vault Name and Certificate Name

$VaultName = "<azure key vault name>"

$certName = "<certificate name as stored in Key Vault>"

# Get the certificate stored in Key Vault (yes, get it as a SECRET: the secret holds the certificate as a base64-encoded PFX)

$secret = Get-AzKeyVaultSecret -VaultName $VaultName -Name $certName

$secretValueText = ($secret.SecretValue | ConvertFrom-SecureString -AsPlainText)

# connect to PnP

$tenant = "contoso.onmicrosoft.com" # or tenant Id

$siteUrl = "https://contoso.sharepoint.com"

$clientID = "<App (client) Id>" # Azure AD app registration that has the same certificate uploaded and the required API permissions granted

Connect-PnPOnline -Url $siteUrl -ClientId $clientID -Tenant $tenant -CertificateBase64Encoded $secretValueText

Get-PnPSite

The same PowerShell code is on GitHub: Connect-PnPOnline-with-certificate.ps1
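Before calling Connect-PnPOnline, it can help to confirm that the Key Vault secret really decodes to a certificate with a private key that has not expired. A minimal sketch, assuming the secret holds a password-less, base64-encoded PFX (the value the script passes to -CertificateBase64Encoded). Test-PfxBase64 is a hypothetical helper name, not a PnP or Az cmdlet:

```powershell
# Hypothetical helper (not part of PnP.PowerShell or Az): checks that a base64 string
# decodes to a certificate that has a private key and is valid right now.
function Test-PfxBase64 {
    param(
        [Parameter(Mandatory)]
        [string] $Base64Pfx
    )

    # Decode the base64 text back into the raw PFX bytes
    $bytes = [Convert]::FromBase64String($Base64Pfx)

    # Load the PFX; assumes it has no password, as with Key Vault certificate secrets
    $cert = [System.Security.Cryptography.X509Certificates.X509Certificate2]::new($bytes)

    $now = Get-Date
    [pscustomobject]@{
        Subject       = $cert.Subject
        Thumbprint    = $cert.Thumbprint
        NotAfter      = $cert.NotAfter
        HasPrivateKey = $cert.HasPrivateKey
        IsValidNow    = ($cert.NotBefore -le $now) -and ($now -le $cert.NotAfter)
    }
}

# Usage with the variable from the script above:
# Test-PfxBase64 -Base64Pfx $secretValueText
```

If HasPrivateKey is false or IsValidNow is false, the app-only connection would fail anyway, so checking first gives a clearer error than the authentication failure.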


I love it:

Update: Private content mode will stop working on June 30, 2025.

Microsoft announced the retirement of Private Content Mode in Microsoft Viva Engage (Yammer).

Message ID MC1045211.

As an Office 365 administrator, I would like to get some reports on Yammer with PowerShell. How is it done?

Patrick Lamber wrote a good article here: “Access the Yammer REST API through PowerShell”. The only thing I would add (important!) is:

By default, even with a Verified Admin token, you do not have access to private messages and private group content.

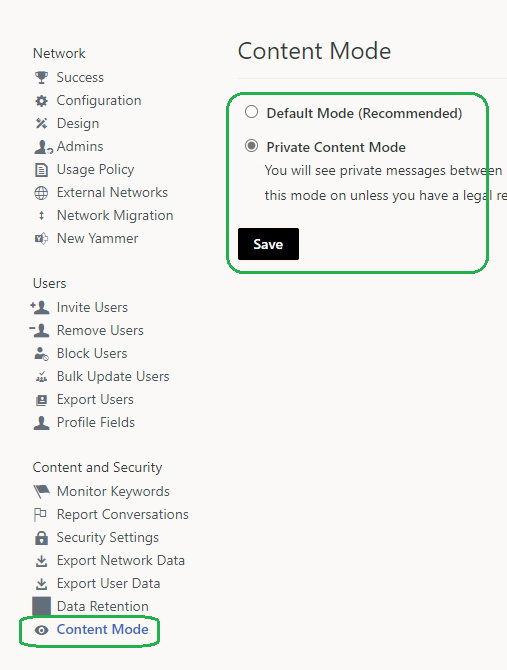

To access private content, you need to select “Private Content Mode” under Yammer Admin Center -> Content and Security -> Content Mode.

See Microsoft: “Monitor private content in Yammer” and

Yammer: “Verified Admin Private Content Mode”

If you do not have “Private Content Mode” set up, you might see some weird “Invoke-WebRequest” errors like:

dotnet --info

dotnet new sln

dotnet new webapi -o API

dotnet sln add API

dotnet watch run

dotnet dev-certs https --trust

dotnet new gitignore

Some useful plug-ins:

VS Code basic configuration:

– AutoSave

– Font Size

– Hide folders: Settings->Exclude “**/obj” “**/bin”

– Compact folders: Settings -> “Compact Folders”

– appsettings.Development.json: change "Microsoft": "Warning" to "Microsoft": "Information"

– launchSettings.json:

"launchBrowser": true/false,

"launchUrl"

git init

create gitignore (dotnet new gitignore)

add appsettings.json to gitignore

git add .

git commit -m "first commit"

git branch -M main

git remote add origin https://github.com/orgName/AppName.git

git push -u origin main

git config --global credential.helper wincred

git config --global user.name "<John Doe>"

git config --global user.email "johndoe@example.com"

Can I use the PowerShell 7 -Parallel option against SharePoint list items with PnP.PowerShell? Can I run something like:

$connection = Get-PnPConnection # pass the connection into the parallel runspaces explicitly

$items | ForEach-Object -Parallel {

Set-PnPListItem -List "LargeList" -Identity $_ -Values @{"Number" = (Get-Random -Minimum 100 -Maximum 200)} -Connection $using:connection

}

Yes, you can. But since this is a cloud operation against Microsoft 365, in my tests you get throttled if you run more than 2 parallel threads, and using just 2 threads does not provide a significant performance improvement.

So try PnP.PowerShell batches instead. With batching, far fewer requests are sent to the server. Consider something like:

$batch = New-PnPBatch

1..100 | ForEach-Object{ Add-PnPListItem -List "ItemTest" -Values @{"Title"="Test Item Batched $_"} -Batch $batch }

Invoke-PnPBatch -Batch $batch

Measurements for adding and setting 100 items with “Add-PnPListItem” and “Set-PnPListItem” in a large SharePoint list (more than 5,000 items):

| Method | Add-PnPListItem, seconds per item | Set-PnPListItem, seconds per item |
|---|---|---|
| Regular, without batching | 1.26 | 1.55 |
| Using batches (New-PnPBatch) | 0.10 | 0.80 |
| Using “Parallel” option, with ThrottleLimit 2 | 0.69 | 0.79 |
| Using “Parallel” option, with ThrottleLimit 3 | 0.44 (failures: ~4/100) | 0.53 (failures: ~3/100) |

Adding items with PnP.PowerShell batching is much faster than without batching.
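To put “much faster” into numbers, the per-item times from the table above can be turned into speedup factors and estimated totals for 100 items. This is only arithmetic on the measured values, and the 100-item totals assume time scales linearly with the number of items:

```powershell
# Per-item times in seconds, copied from the measurements table above
$timings = @(
    [pscustomobject]@{ Method = 'Regular';           Add = 1.26; Set = 1.55 }
    [pscustomobject]@{ Method = 'Batch';             Add = 0.10; Set = 0.80 }
    [pscustomobject]@{ Method = 'Parallel, limit 2'; Add = 0.69; Set = 0.79 }
)

# The sequential, non-batched run is the baseline for the speedup factors
$baseline = $timings | Where-Object Method -eq 'Regular'

$summary = foreach ($t in $timings) {
    [pscustomobject]@{
        Method      = $t.Method
        AddTotal100 = [math]::Round($t.Add * 100, 1)            # estimated seconds for 100 adds
        AddSpeedup  = [math]::Round($baseline.Add / $t.Add, 2)
        SetTotal100 = [math]::Round($t.Set * 100, 1)            # estimated seconds for 100 updates
        SetSpeedup  = [math]::Round($baseline.Set / $t.Set, 2)
    }
}

$summary | Format-Table -AutoSize
```

The numbers show that batching gives roughly a 12.6x speedup for adds (about 126 s vs. 10 s per 100 items), but only about 1.9x for updates, which is close to what -Parallel with ThrottleLimit 2 achieves.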


(WIP)

PowerShell is our best friend when it comes to ad-hoc and/or scheduled reports in Microsoft 365. The PnP team is doing a great job providing more and more functionality in the PnP PowerShell module for Office 365 SharePoint and Teams.

Small and medium-sized organizations are mostly fine, but for large companies it might be a problem simply because of the huge amount of data stored in SharePoint. PowerShell reports on all users or all sites might run for days, which is probably OK if you run the report once, but not acceptable if you need it daily, weekly, or on demand.

How can we make heavy PowerShell scripts run faster?

Of course, you start with the logic (algorithm) and by leveraging full PowerShell functionality (e.g., PowerShell 7 parallelism or PnP batching).

(examples)

What if you have done all that and it still takes too long? Then you need something like brute force: the closer your code runs to your tenant, the better.

What are the options?

– Automation account runbook (+workflow)

– Azure Function Apps

– Azure VM in the region closest to your Tenant

An Automation account runbook seemed like a good option, but it is not something Microsoft promotes. Quite the opposite: at the time of writing, Automation accounts supported only PowerShell 5 (not 7), there were no plug-ins for VS Code, and there had recently been announcements about retiring some features.

Meanwhile, I tested it and did not find any significant increase in speed. In a nutshell, what is behind this service? The same Windows machines running somewhere in Azure.

TBC

References

– PnP PowerShell

Quick answer: spin up a few VMs in different Azure regions, then ping your SharePoint tenant from each of them. The moment you see a ~1 ms ping, you know the tenant's exact region.

Full story

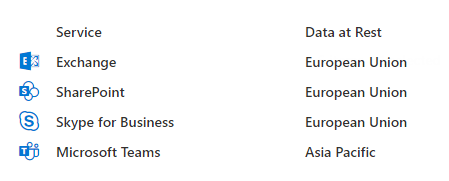

Microsoft says: “Customers should view tenant specific data location information in your Microsoft 365 Admin Center in Settings | Org settings | Organization Profile | Data location.”

And it might look like:

That is accurate to the geography (e.g., US, EU, AP), but not to the region (for instance, “Central US”, “UK West” or “Australia Southeast”).

In other words, if you know your data is in the US, you still do not know exactly where: East, West, Central, or South US.

Meanwhile, when you create an Azure resource (e.g., a virtual machine), you can select a specific region.

Can we just ping the tenant, analyze the results, and find the Office 365 tenant region?

Luckily, a SharePoint tenant responds to a plain ping:

PS>ping tenantName.SharePoint.com

I have tested 5 regions and 4 different tenants:

| Ping from region / to tenant (ms) | Tenant 1 (US) | Tenant 2 (EU) | Tenant 3 (US) | Tenant 4 (US) |
|---|---|---|---|---|
| North Europe | 73 | 17 | 96 | 101 |
| East US | 1 | 83 | 39 | 31 |
| Central US | 22 | 114 | 23 | 23 |
| West US | 63 | 146 | 36 | 33 |
| South Central US | 31 | 112 | 1 | 1 |

So I figured it out:

My Office 365 tenants #3 and #4 are in South Central US (San Antonio, Texas), and tenant #1 resides in East US.
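The rule of thumb above (the region with the lowest round-trip time, ideally ~1 ms, is the tenant's region) can be written as a small PowerShell sketch over the table's values. Get-ClosestRegion is a hypothetical helper, and T1..T4 are just labels for the four tenants. It also makes one limit visible: for tenant 2 the best result is 17 ms from North Europe, not ~1 ms, so the five tested regions do not pinpoint that tenant.

```powershell
# Ping results (ms) from the table above: region -> latency per tenant.
# On a PowerShell 7 VM, one value could be collected with, e.g.:
# (Test-Connection -TargetName tenantName.sharepoint.com -Count 4 | Measure-Object -Property Latency -Average).Average
$pingMs = [ordered]@{
    'North Europe'     = @{ T1 = 73; T2 = 17;  T3 = 96; T4 = 101 }
    'East US'          = @{ T1 = 1;  T2 = 83;  T3 = 39; T4 = 31 }
    'Central US'       = @{ T1 = 22; T2 = 114; T3 = 23; T4 = 23 }
    'West US'          = @{ T1 = 63; T2 = 146; T3 = 36; T4 = 33 }
    'South Central US' = @{ T1 = 31; T2 = 112; T3 = 1;  T4 = 1 }
}

# Hypothetical helper: returns the region with the lowest latency to the given tenant
function Get-ClosestRegion {
    param(
        [Parameter(Mandatory)] [System.Collections.IDictionary] $Matrix,
        [Parameter(Mandatory)] [string] $Tenant
    )
    $Matrix.GetEnumerator() |
        ForEach-Object { [pscustomobject]@{ Region = $_.Key; Ms = $_.Value[$Tenant] } } |
        Sort-Object Ms |
        Select-Object -First 1
}

foreach ($tenant in 'T1', 'T2', 'T3', 'T4') {
    $best = Get-ClosestRegion -Matrix $pingMs -Tenant $tenant
    "{0}: {1} ({2} ms)" -f $tenant, $best.Region, $best.Ms
}
```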

Imagine you are running heavy reports against your tenant.

You probably want your code to run as close to your tenant as possible.

To do that, you can spin up a VM in Azure or use Azure Functions; just select the proper region 🙂

(See also “Long-running PowerShell reports optimization in Office 365”.)

References:

– Where your Microsoft 365 customer data is stored